Today’s large language models (LLMs), such as GPT-4 and Llama, have dramatically reshaped how humans interact with technology, enhancing productivity, creativity, and accessibility. Yet the next wave of LLM evolution promises even more profound implications. Future LLMs will learn continuously from interactions, dynamically adapting their responses to become deeply contextual and personal. These models won’t just respond to inputs; they will anticipate needs and integrate seamlessly with our daily lives, blurring the line between user and technology.

Vision LLMs: Bridging Perception & Understanding

Vision-enabled LLMs like GPT-4V represent the convergence of visual and linguistic cognition, taking AI beyond simple perception towards genuine understanding. Future Vision LLMs will interpret complex visual scenarios and provide insightful reasoning, transforming applications in critical fields such as healthcare, automotive safety, and smart urban infrastructure. Instead of simply describing what they see, these systems will deeply understand context, predict outcomes, and recommend actionable insights, fundamentally enhancing human decision-making and creativity.

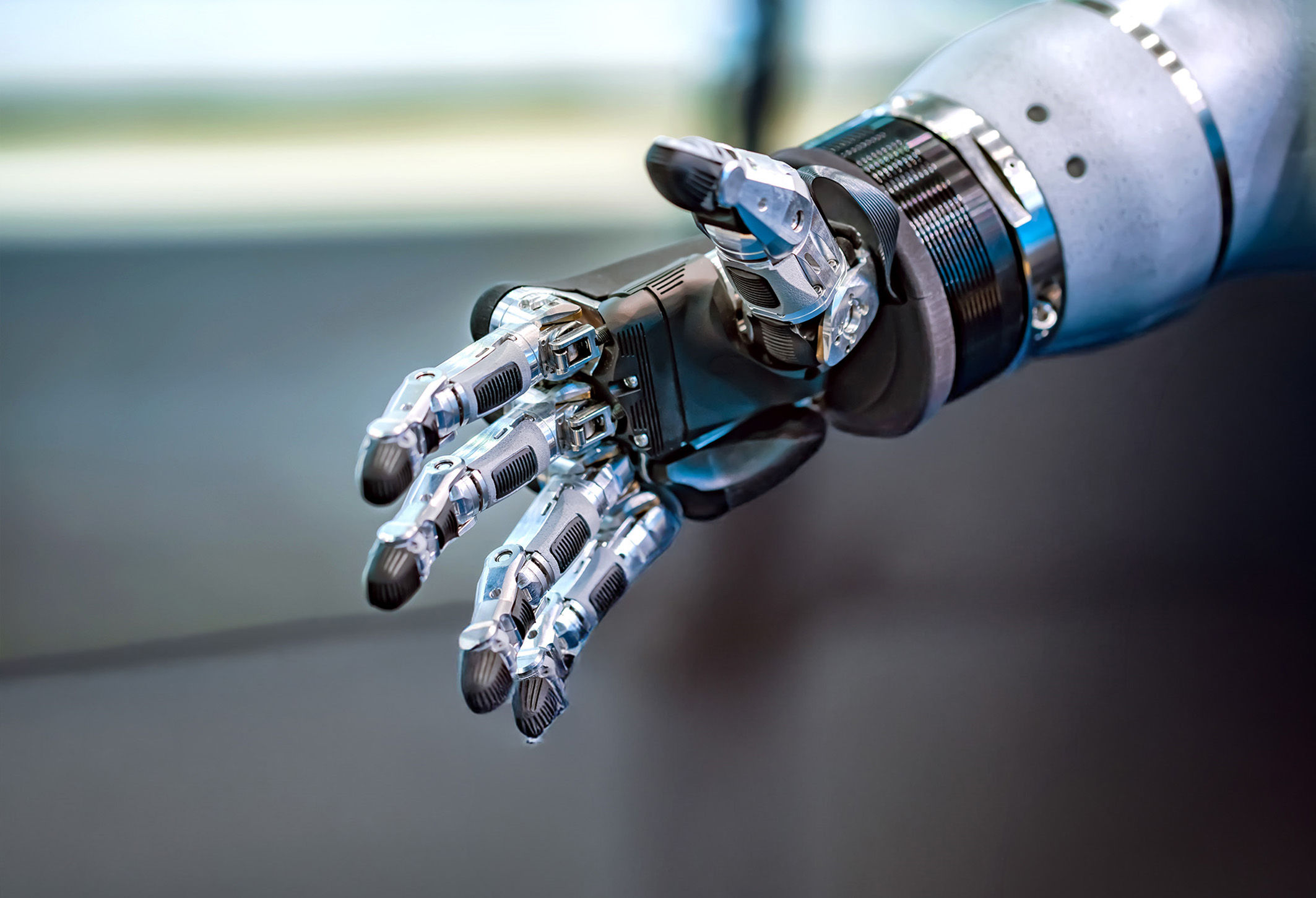

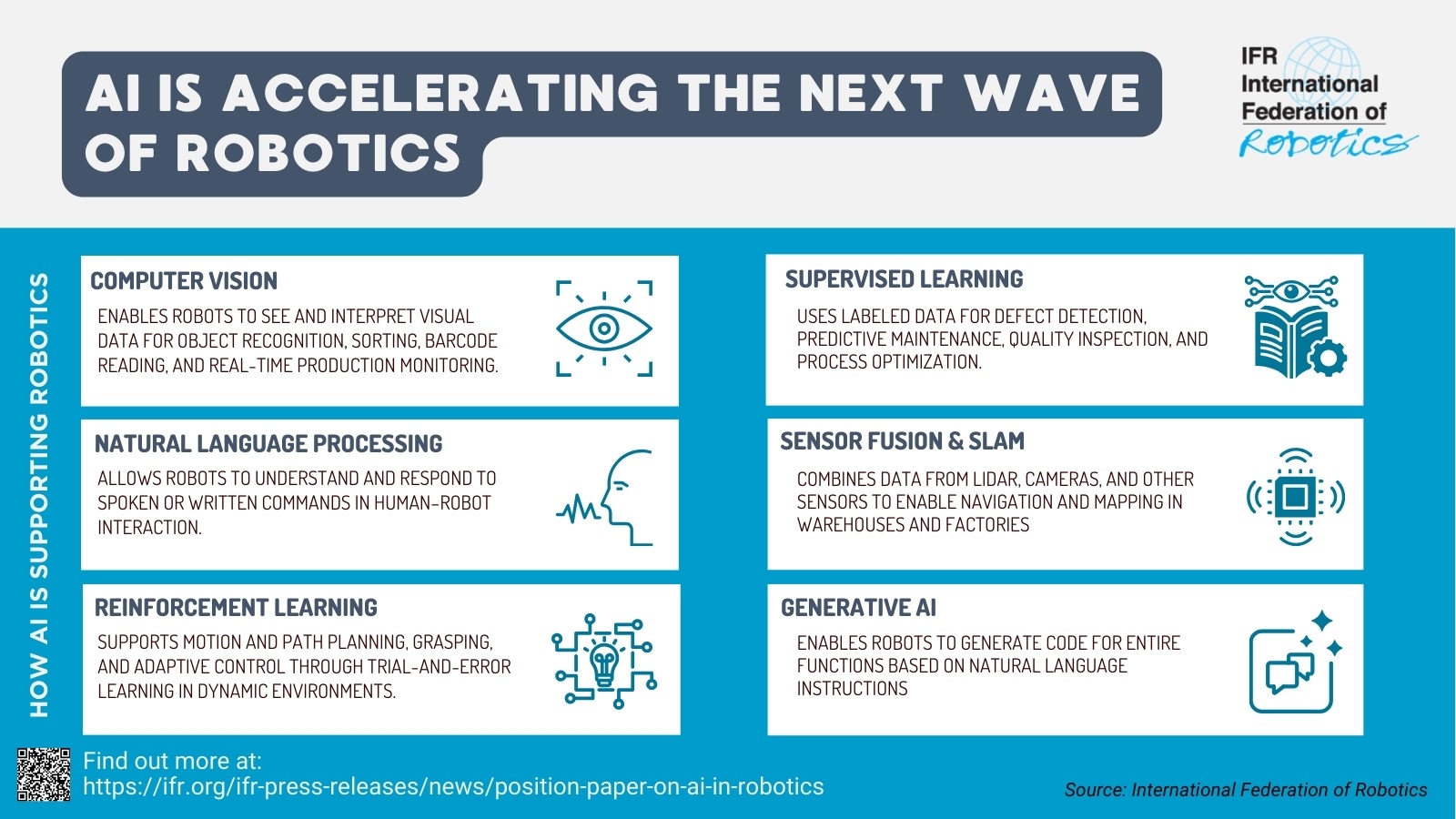

Multi-Modality: Integrating Human-Like Cognition

Multi-modality in AI refers to the integration of various types of data (text, images, audio, and sensor inputs) into a unified cognitive framework. This capability mirrors human intelligence, enabling AI systems to process diverse information simultaneously and coherently. Future multi-modal AI systems will effortlessly blend data streams, resulting in sophisticated understanding and reasoning across different sensory inputs. The implications of this are enormous, ranging from advanced robotics and personalized medical care to innovative forms of media and communication.

The Rise of AI Semiconductor Technologies

Realizing the vision of advanced, intelligent systems requires equally revolutionary AI semiconductor technology. This shift in computing architecture towards edge-based, on-device AI represents one of the most significant transformations in the industry. Companies like DeepX, Intel, Qualcomm, and AMD are at the forefront of this evolution, developing specialized chips designed to handle complex multi-modal AI tasks locally, with unprecedented efficiency. These next-generation AI semiconductors address fundamental challenges associated with latency, bandwidth limitations, and privacy concerns. By bringing powerful AI computation directly to devices, such as smartphones, autonomous vehicles, drones, and wearable technology, we achieve instant responsiveness, better privacy, and significantly reduced energy consumption.

“The convergence of advanced LLMs, multi-modal AI systems, and revolutionary semiconductor technologies sets the stage for a new era of intelligent computing.” Lokwon Kim, DeepX

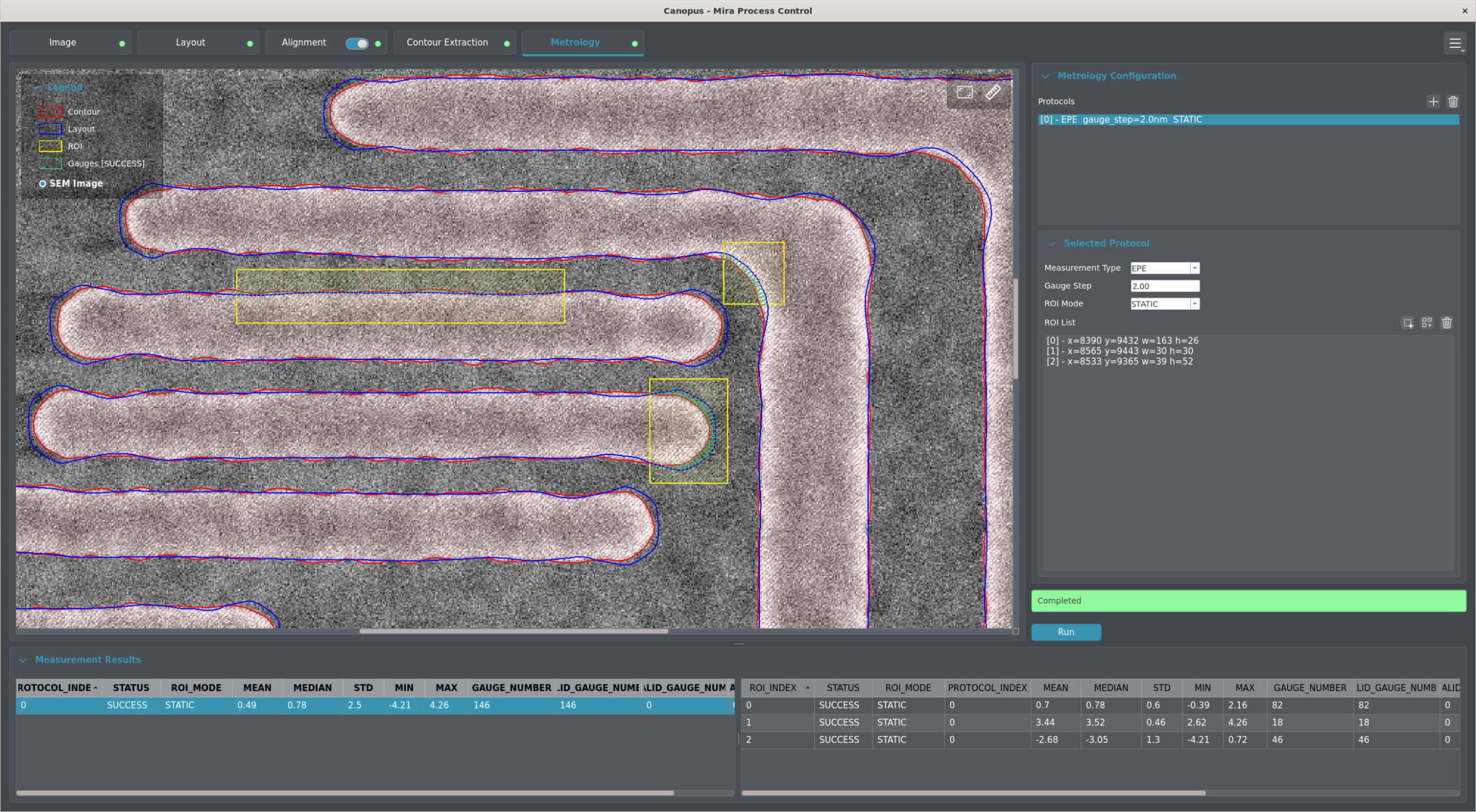

Running LLMs Under 5 W

Looking beyond current deployments, DeepX is now developing its next-generation chip, the DX-M2, an on-device AI processor designed to run LLMs under 5 W. As large language model technology evolves, the field is beginning to split in two directions: one track continues to scale up LLMs in cloud data centers in pursuit of artificial general intelligence (AGI); the other, more practical path focuses on lightweight, efficient models optimized for local inference, such as DeepSeek and Meta’s Llama 4. The DX-M2 is purpose-built for this second future. With ultra-low power consumption, high performance, and a silicon architecture tailored for real-world deployment, it will support LLMs like DeepSeek and Llama 4 directly at the edge, with no cloud dependency required. Most notably, the processor is being developed to become the first AI inference chip built on the leading-edge 2nm process, marking a new era of performance-per-watt leadership. In short, the DX-M2 isn’t just about running LLMs efficiently; it’s about unlocking the next stage of intelligent devices, fully autonomous and truly local.
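To put a power budget of this kind into perspective, the rough sketch below relates model size, weight precision, and power draw. The parameter count, bit widths, and throughput are illustrative assumptions chosen for round numbers; they are not DX-M2 specifications or measurements.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def energy_per_token_joules(power_watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token at a steady power draw."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


# Hypothetical model size: 8 billion parameters (an assumption for illustration).
PARAMS = 8e9
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_footprint_gb(PARAMS, bits):.1f} GB")

# Hypothetical throughput of 10 tokens/s at a 5 W power budget.
print(f"{energy_per_token_joules(5.0, 10.0):.2f} J per token")
```

Under these assumptions, lowering weight precision from 16 to 4 bits cuts the footprint by a factor of four, which is one reason lightweight models are a natural fit for edge devices.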

Memory-Centric AI Architectures

One key innovation in AI semiconductor technology is the shift towards memory-centric computing architectures. Traditional computing paradigms prioritize raw processing speed; however, future AI models demand rapid access to vast amounts of data. Advanced memory solutions, such as the HBM4 generation of High-Bandwidth Memory (HBM) and emerging technologies like MRAM and ReRAM, are becoming essential for managing the heavy data flows required by complex AI workloads. In addition, interconnect standards such as Compute Express Link (CXL) 3.0 and Universal Chiplet Interconnect Express (UCIe) enable faster, more efficient data exchange between computing components, further enhancing the performance and scalability of multi-modal AI applications.
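A simple estimate shows why memory access matters so much. When a dense model generates text one token at a time, each token requires reading roughly all of the weights once, so memory bandwidth sets a ceiling on throughput. The sketch below computes that ceiling; the weight size and bandwidth tiers are hypothetical values, not figures for any specific memory product, and the estimate ignores compute time, caches, and batching.

```python
def max_decode_tokens_per_second(bandwidth_gb_s: float, weight_gb: float) -> float:
    """Upper bound on single-stream decode rate if every token streams all weights once."""
    if weight_gb <= 0:
        raise ValueError("weight_gb must be positive")
    return bandwidth_gb_s / weight_gb


# Hypothetical model: 4 GB of weights (for example, about 8B parameters at 4 bits).
WEIGHT_GB = 4.0

# Hypothetical memory bandwidth tiers in GB/s, chosen only for illustration.
BANDWIDTH_TIERS_GB_S = {"tier A": 50.0, "tier B": 200.0, "tier C": 1000.0}

for label, bandwidth in BANDWIDTH_TIERS_GB_S.items():
    ceiling = max_decode_tokens_per_second(bandwidth, WEIGHT_GB)
    print(f"{label}: {bandwidth:>6.0f} GB/s -> at most {ceiling:.1f} tokens/s")
```

In this simplified model, throughput scales linearly with bandwidth and inversely with model size, regardless of how fast the processor's arithmetic units are.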

Symbiotic Relationship: Software-Hardware Co-Design

The seamless integration of hardware and software design is crucial for harnessing the full potential of AI. Strategic partnerships between major AI software innovators and hardware providers, such as the one between OpenAI and Microsoft, or the ecosystem around Meta’s Llama initiative, highlight the essential need for software-hardware co-design. Companies like DeepX recognize this and are actively fostering collaborations that result in customized semiconductor architectures specifically optimized for advanced multi-modal AI tasks.

Intelligent Orchestration: Managing Complex AI Ecosystems

As AI becomes increasingly distributed and embedded in diverse devices, intelligent orchestration tools become vital. These software solutions dynamically manage AI workloads, allocating tasks across cloud servers, edge devices, and specialized hardware to optimize performance and efficiency. Technologies such as AWS IoT Greengrass exemplify the growing role of orchestrators in ensuring seamless operation across complex, multi-device ecosystems.
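The placement decision at the heart of such orchestration can be sketched in a few lines. The example below is a toy illustration only: the `Target` and `Task` types, the `place` function, and all numbers are hypothetical, and it does not use or represent the API of AWS IoT Greengrass or any other product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Target:
    name: str
    latency_ms: float     # expected response latency (assumed known)
    on_device: bool       # True if data never leaves the device
    max_model_gb: float   # largest model this target can host


@dataclass(frozen=True)
class Task:
    name: str
    latency_budget_ms: float
    private: bool         # True if the data must stay on the device
    model_gb: float


def place(task: Task, targets: list[Target]) -> Optional[Target]:
    """Return the lowest-latency target that meets every constraint, or None."""
    feasible = [
        t for t in targets
        if t.latency_ms <= task.latency_budget_ms
        and t.max_model_gb >= task.model_gb
        and (t.on_device or not task.private)
    ]
    return min(feasible, key=lambda t: t.latency_ms, default=None)


# Hypothetical deployment with one edge accelerator and one cloud server.
TARGETS = [
    Target("edge-npu", latency_ms=15, on_device=True, max_model_gb=4),
    Target("cloud-gpu", latency_ms=120, on_device=False, max_model_gb=80),
]

for task in (
    Task("wake-word", latency_budget_ms=50, private=True, model_gb=0.1),
    Task("long-report", latency_budget_ms=2000, private=False, model_gb=40),
    Task("private-large", latency_budget_ms=2000, private=True, model_gb=40),
):
    chosen = place(task, TARGETS)
    print(task.name, "->", chosen.name if chosen else "no feasible target")
```

A real orchestrator would also weigh energy, cost, and current load, and would react to changing conditions, but the core idea of filtering by constraints and then ranking the remaining targets is the same.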

Empowering Humanity Through On-Device AI

On-device AI semiconductor technology holds transformative potential not just for businesses and industries, but also for individuals and society at large. Localizing AI computation significantly enhances privacy and data security, because sensitive information can be processed without leaving the device. This technology also democratizes access to advanced intelligence, empowering people in remote or resource-limited areas. Personalized AI assistants embedded in everyday devices will augment individual capabilities, enabling users to achieve higher productivity, make informed decisions rapidly, and unlock new creative potential. By preserving personal autonomy and providing inclusive access, on-device AI becomes an essential tool for societal advancement.

Conclusion

The convergence of advanced LLMs, multi-modal AI systems, and revolutionary semiconductor technologies sets the stage for a new era of intelligent computing. This integrated approach promises unprecedented capabilities and opportunities, fundamentally reshaping how humans interact with technology. AI semiconductor innovation not only accelerates technological advancements but also ensures these capabilities are accessible and beneficial to all. We are not merely observers of this revolution – we are participants and beneficiaries. Together, we are shaping an intelligent future that enhances human potential, fosters creativity, and ensures a more inclusive, empowered society.